More filters, same broken process: how AI sourcing tools are making hiring worse

This is the third post of four in our hiring series. Part 1 talked about how we successfully ran the largest blind eng hiring experiment of all time. Part 2 talked about how we built an AI model for eng hiring that outperforms recruiters and LLMs.

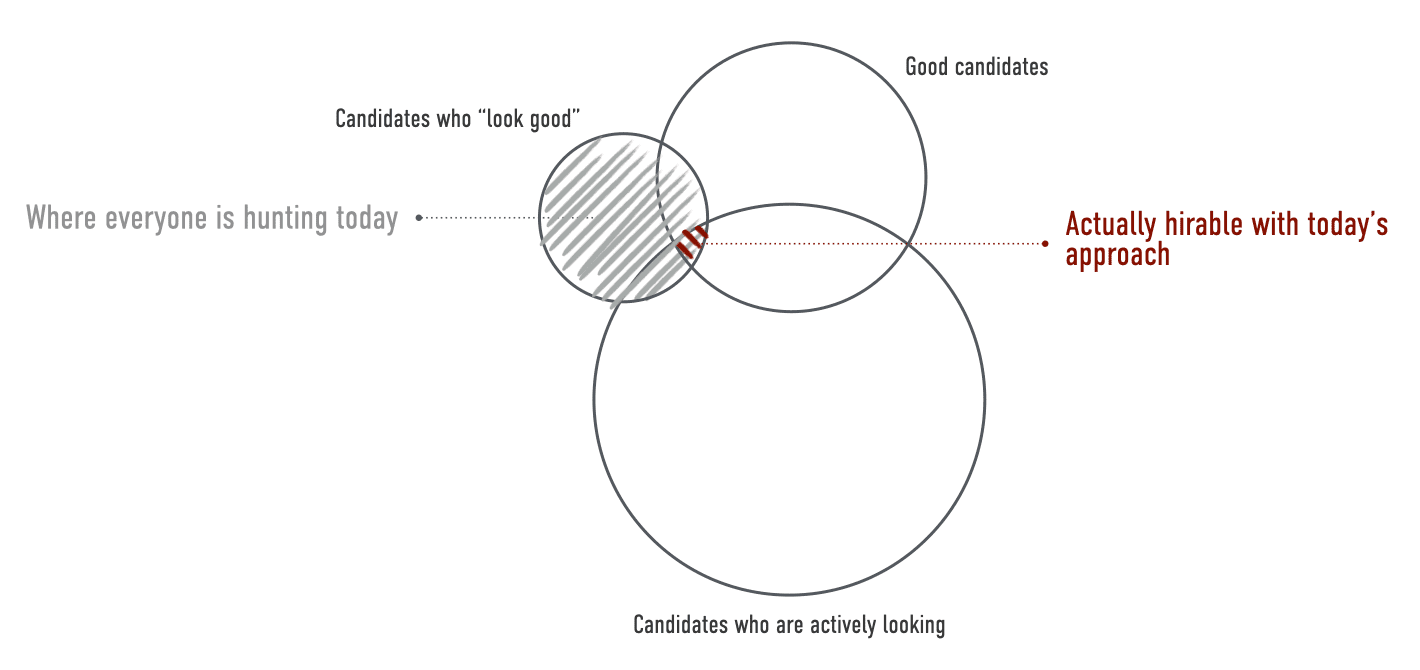

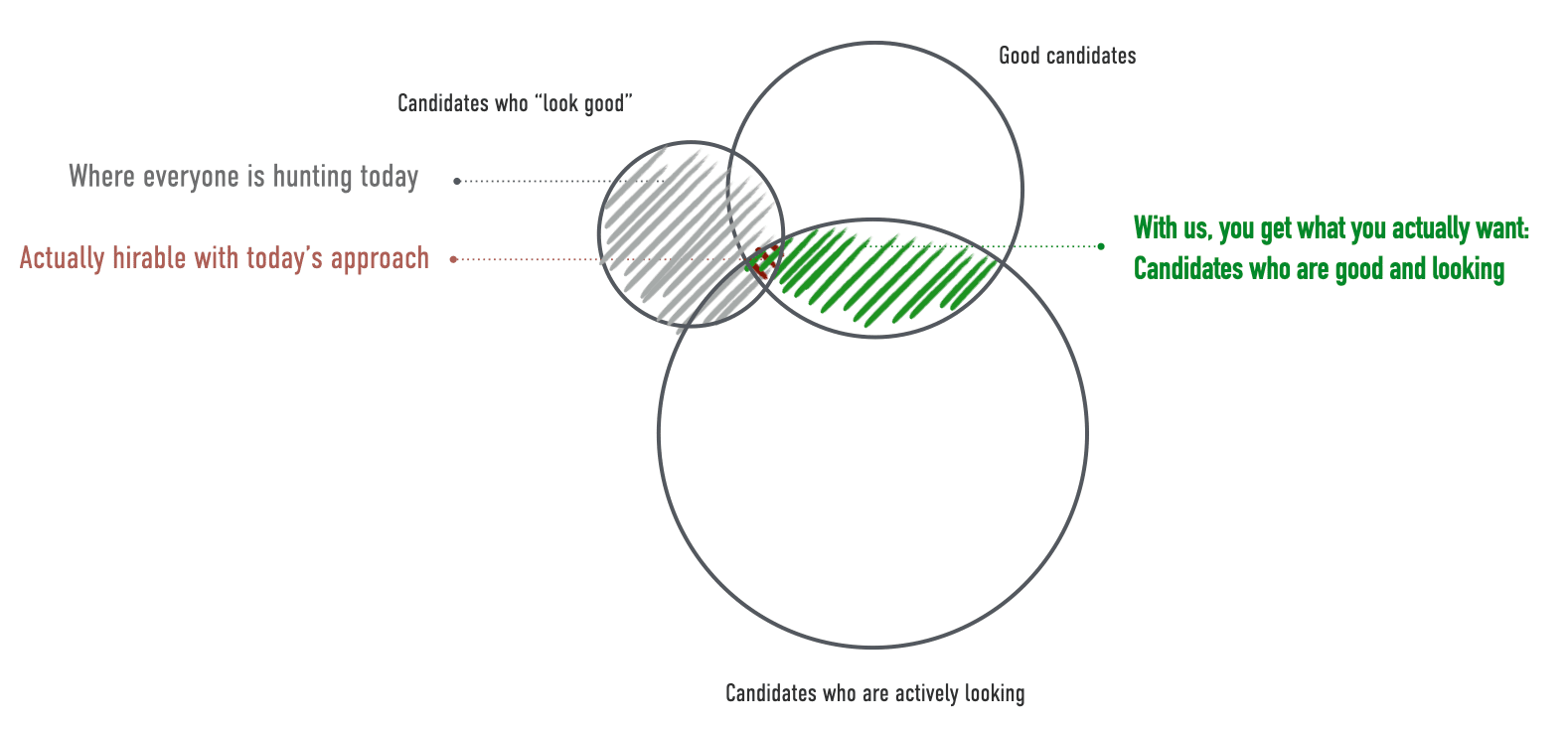

I’ve spent years trying to articulate everything that’s wrong with the status quo’s approach to technical recruiting. Here's everything that’s wrong with eng hiring today, in one diagram.

Essentially, everyone is chasing the same set of candidates who look good. Unfortunately, many of those candidates aren’t looking right now, and many aren’t actually good. (We have a lot of data showing that pedigree doesn’t really predict performance — check out the Appendix at the bottom of this post).

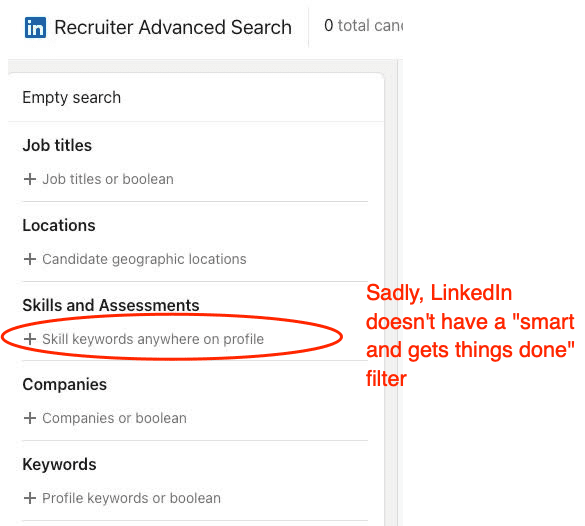

But, right now, there isn’t a good way to find good candidates. Despite the advent of new AI-based sourcing tools (more on that later in this post), LinkedIn still dominates sourcing, and it doesn’t let you search for “good.”

So, instead of being able to look directly for the right people, recruiters have to rely on proxies. The most obvious proxy is where someone has worked before, which usually turns into looking for people from FAANG and FAANG-adjacent companies (and maybe a few very high-profile startups as well).

As recruiters fail to hire enough people using just the usual FAANG proxies, hiring managers get increasingly frustrated. They blame recruiting and try to help, in their own way, by making their criteria even more specific.

Now, instead of just asking for FAANG candidates from top schools, you can ask for FAANG candidates who worked at a startup, where that startup was backed by some specific investor and where the candidate has previously owned a poodle (because some hiring manager told their recruiter that engineers with poodles write cleaner code).

And AI makes it possible to get that specific. Obviously the poodle example is a bit overblown, but it’s not too far from reality.

Enter the technical recruiting death spiral.

As long as companies continue to recruit like this, hiring is only going to get slower, harder, and more expensive. And a growing long tail of talented engineers who ARE smart, who CAN get things done, who will be easier to close, and who will stay longer at your company — but who don't meet the increasingly specific hiring criteria — are left out in the cold.
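To see why stacking criteria shrinks the pool so quickly, here's a back-of-the-envelope sketch. Every number in it is invented for illustration: the starting pool size and the share of candidates passing each filter are assumptions, not data. It also assumes the filters are independent of each other, which real ones aren't.

```python
def remaining_pool(pool_size, pass_rates):
    """Return the pool size left after each successive filter.

    pass_rates holds, for each filter, the fraction of the current
    pool that satisfies it (hypothetical values, assumed independent).
    """
    sizes = []
    remaining = float(pool_size)
    for rate in pass_rates:
        remaining *= rate
        sizes.append(round(remaining))
    return sizes


# Hypothetical starting pool and filter pass rates, for illustration only.
pool = 1_000_000
filters = [0.10, 0.20, 0.10, 0.05]  # e.g. employer, school, startup stint, investor

sizes = remaining_pool(pool, filters)
print(sizes)
```

With these made-up numbers, four filters take a pool of a million engineers down to about a hundred, and none of the filters says anything about whether those hundred are good or looking.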

Today’s AI solutions are making hiring even worse

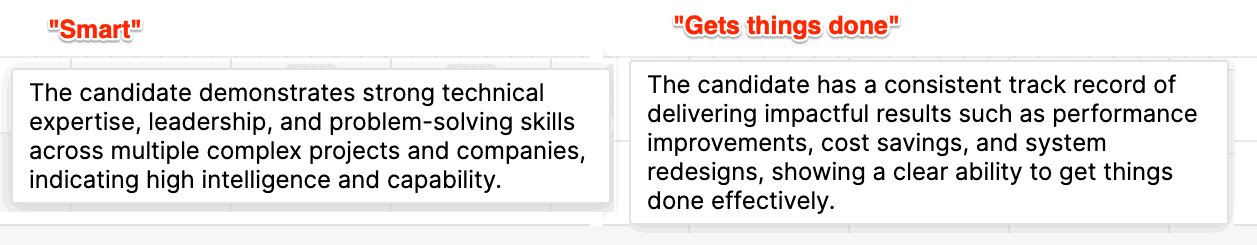

At the beginning of this post, we talked about the only two things that matter for hiring: (1) whether an engineer is good (or in the immortal words of Joel Spolsky, they’re “smart and get things done”) and (2) whether they’re looking (i.e., are open to new opportunities) right now.

How do AI tools fare against these criteria?

TL;DR Not well. But why?

First, recruiters are, not surprisingly, the buyers for recruiting tools. And what do recruiters want? Surprisingly, it’s not necessarily to make more (or better) hires. Because just like everyone else, recruiters’ main priority is to keep their jobs.

How does a recruiter keep their job? By bringing in the types of candidates that their manager tasked them with, even sometimes against their own better judgment. So, they ride the technical recruiting death spiral and focus on the candidates who fit the manager’s increasingly narrow criteria.

Given that recruiters are the buyers and are incentivized to look for increasingly specific types of candidates, if you’re an AI sourcing tool, and you want to compete with LinkedIn, damned right you’re going to listen to your customer and do things that make recruiters look good! This is what the market wants!

So the new generation of recruiting tools answers the call of the market, codifies the recruiting death spiral, and, with the help of AI, makes it spin faster than ever.

Probably the most salient example of the new wave of AI recruiting tools is Juicebox. Juicebox does AI-assisted sourcing. If there’s a challenger to LinkedIn Recruiter, it’s them. They boast tens of millions in ARR and have recently raised a large Series B.

It’s unquestionably good that LinkedIn Recruiter, which has had a de facto monopoly on technical sourcing for decades, finally has a competitor. BUT, because Juicebox is listening to their buyers (recruiters) and following where the market leads them, it looks like they have fallen prey to all the same problems.

Before I say anything else, I want to be clear about something. I’ve spoken with one of the founders. He was smart and pleasant, and I have no reason to think that he or the rest of the team at Juicebox have any bad intentions.

And incidentally, there are plenty of other AI sourcing tools, but Juicebox has gotten the most traction by far, so in this piece, I’ll focus on them. That said, pretty much all the big picture criticisms that follow apply to any of the new wave of recruiting tools.

I played around with Juicebox quite a bit for this piece, with the intent of answering one important question: Can this tool avoid the technical recruiting death spiral by surfacing engineers who are good (rather than relying on proxies) and who are looking right now?

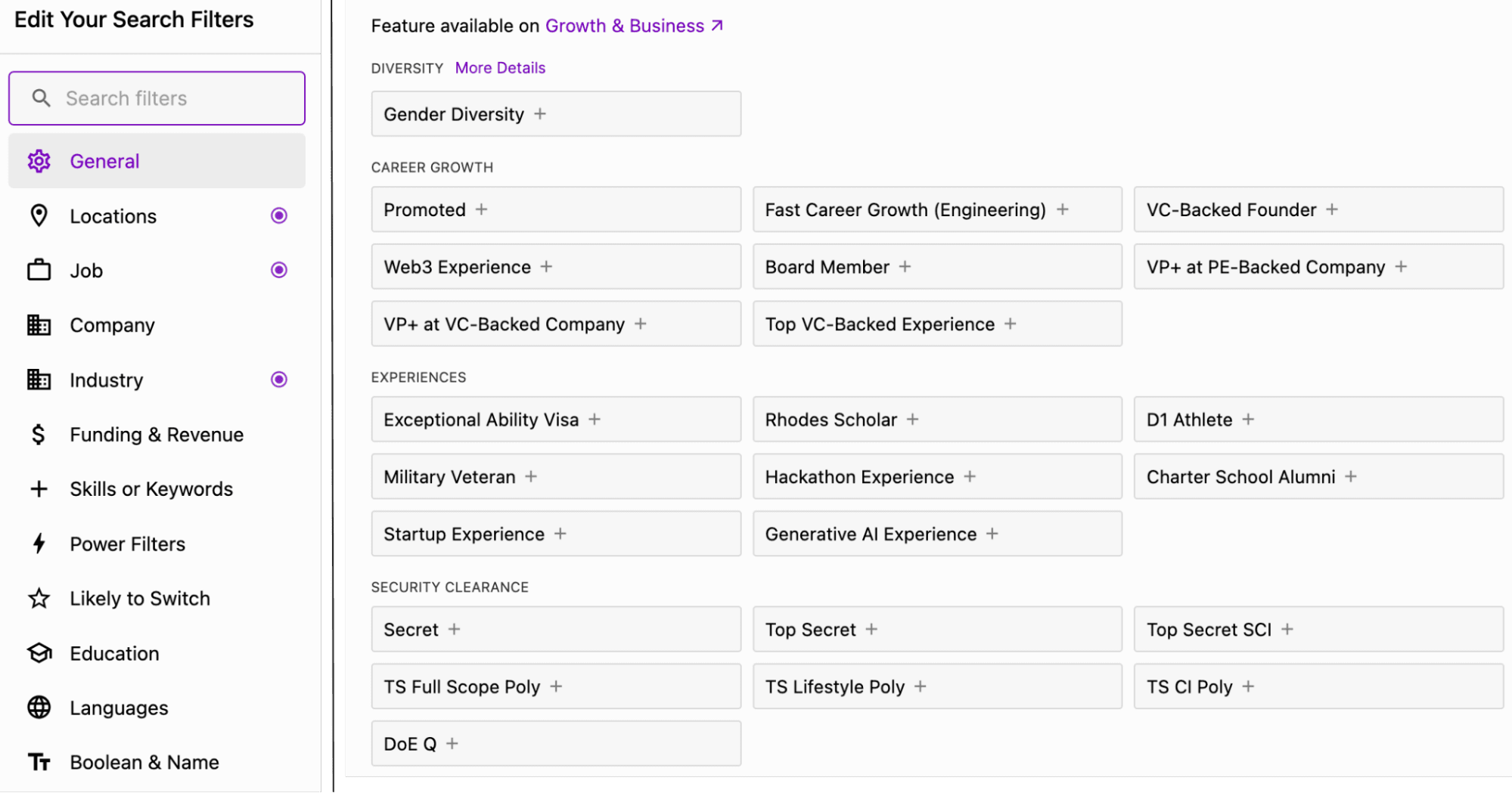

Here are Juicebox’s filters. They look similar to LinkedIn’s, but, in response to recruiter demand, they are way more granular. Despite this increased granularity, they are all still proxies. There still isn’t anything resembling a “smart and gets things done” filter.

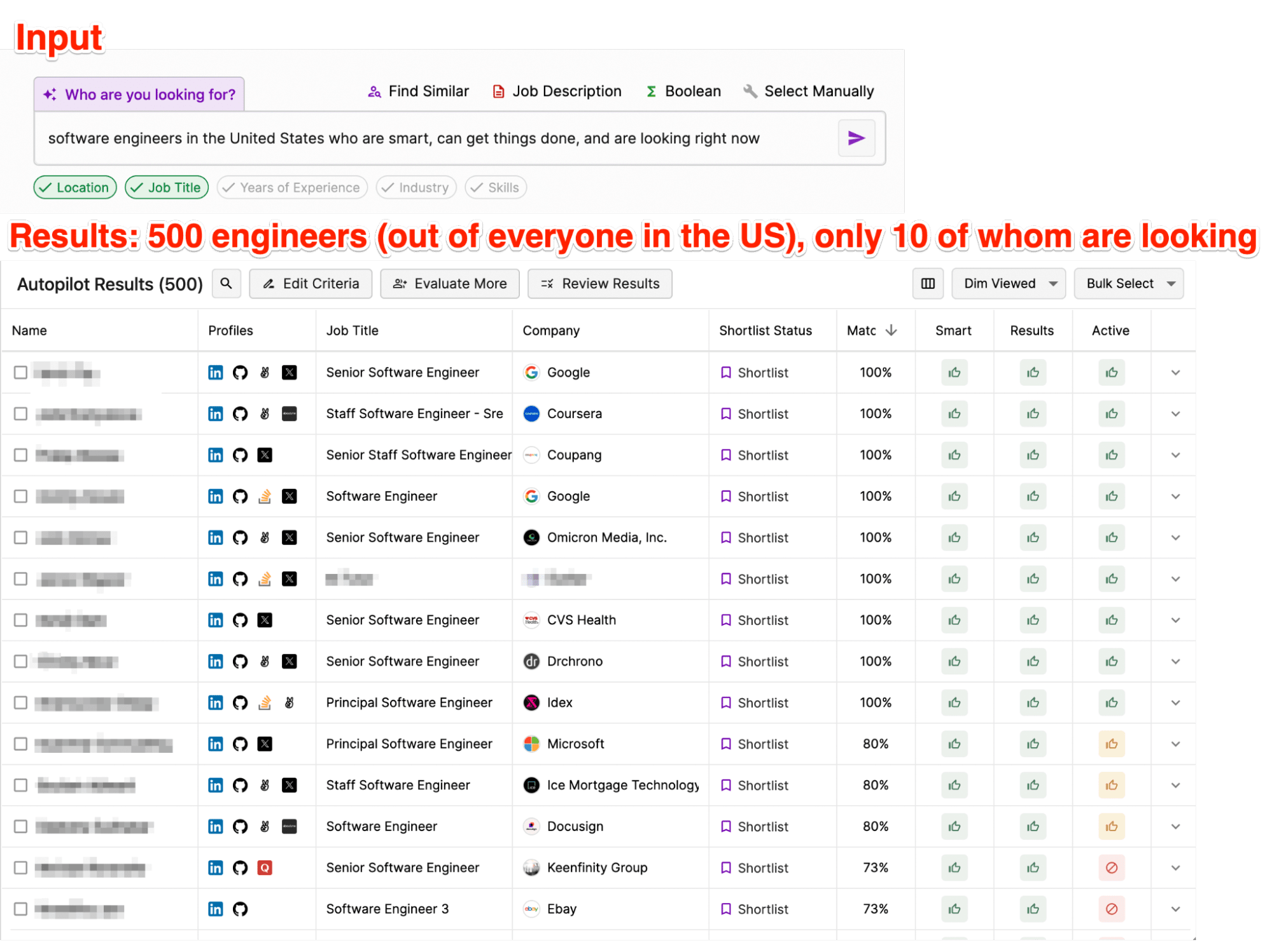

Juicebox also has a new feature called Autopilot, which lets you search for whatever you want. Now, I’m obviously not using the product as intended; usually Juicebox users probably list more specific criteria (things like years of experience, industry, and skills… the grayed out boxes above). But I wanted to see if I could look for “smart and gets things done.”

I did this on purpose, to make a point. Can AI search tools give us what we actually want: people who are good and looking right now? The answer appears to be “hell no”.

I got back just 500 profiles out of the entire corpus of millions of software engineers. Below are the first few (names and anything else identifying have been blurred out). Only 10 or so were marked as “actively looking”, which seems absurdly low.

The AI tried its best, and it provided reasons for why it thought certain candidates were smart and could get things done (which you can see by hovering over the thumb icons). Unfortunately, these reasons are a study in the superficial eloquence of LLMs. Without performance data, the AI can’t tell who’s good. So it picks some profiles and makes up some generic, plausible-sounding stuff.

Incidentally, I cross-referenced the results with interviewing.io’s data, and of the candidates on the list we had performance data for, only about 10% were top performers.
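For the curious, a cross-reference like this can be sketched roughly as follows. This is a minimal illustration and not interviewing.io's actual pipeline: the function name, the candidate IDs, and the true/false "top performer" labels are all hypothetical.

```python
def top_performer_share(result_ids, performance):
    """Share of top performers among search results that have performance data.

    result_ids: candidate IDs returned by the search (hypothetical).
    performance: dict mapping candidate ID -> True if a top performer.
    Returns None when no result has performance data.
    """
    # Keep only the results we can actually say something about.
    matched = [performance[cid] for cid in result_ids if cid in performance]
    if not matched:
        return None
    return sum(1 for is_top in matched if is_top) / len(matched)


# Made-up example: five search results, three of which have performance data.
results = ["a", "b", "c", "d", "e"]
performance = {"a": False, "b": True, "c": False, "x": True}

share = top_performer_share(results, performance)
print(share)
```

Note that the denominator is the number of results with performance data, not the full result list, which matches how the 10% figure above is stated.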

I know that most recruiters won’t be using Juicebox or other tools this way. They’ll be using Juicebox’s superpower: turbocharged specificity and way more filters than LinkedIn. Unfortunately, none of these filters can actually tell us if the candidate is good… or even if they’re looking now.

With tools like Juicebox, we get increasingly specific, sourcing takes longer and longer, candidates still fail interviews, we spend more and more time interviewing while overlooking people who are actually good, and we still don’t make the hires that we need. In other words, the technical recruiting death spiral, from earlier in this post.

Break the cycle

The only way out of the death spiral is to stop relying on proxies entirely. You need to know two things: is this person good, and are they looking? Everything else is noise.

At interviewing.io, we happen to have both of these signals.

By the way, you don't have to use us. If you have another data source that tells you which candidates are good and looking, great, use that. It'll make the industry better to have multiple sources of truth on this one.

But if you don't have one, consider using us. Why? We know who's good and looking.

We know who’s looking because they’re practicing on interviewing.io. No proxies and no guessing about layoffs or funding rounds or stock price. We just know who’s looking because engineers start practicing with us months before they do any real interviews.

And we also know who’s good because we know how they do in interviews. Simple as that. Past interview performance, in aggregate, is remarkably predictive of future interview performance.

About 10,000 engineers sign up for interviewing.io every month. We know who’s good and looking, and we can help you hire faster, cheaper, and more efficiently than ever before. In other words, you’ll be hiring from the green part of the Venn diagram instead of the red.

Do you want to be hiring from the green part rather than the red part? Try us out. You can use us as a source of excellent candidates and/or you can use our predictive model[1] to help you surface the best people from your inbound applications. Just fill in this form for early access, and we’ll be in touch.

Footnotes:

[1] Yes, we have AI as well. But it’s trained on anonymized data, not recruiter and hiring manager preferences. I’ll share more about it in an upcoming post.