More filters, same broken process: how AI sourcing tools are making hiring worse

I've spent 15 years in technical recruiting, and I've spent most of that time trying to put into words everything that's wrong with how we hire engineers. Here it is.

Everyone is chasing the same candidates who look good on paper. Many of them aren't looking right now, and many of them aren't actually good. But there's no way to search for "good." LinkedIn doesn't have that filter. So recruiters rely on proxies. Where did someone work or go to school? FAANG? A top school? A specific VC-backed startup?

The new wave of AI sourcing tools was supposed to fix this. It didn't. The data about who's actually good and who's actually looking just doesn't exist in these tools. So instead of solving the problem, they let recruiters get way more specific about the wrong things. Now you can ask for a FAANG engineer who also worked at a startup backed by a specific investor and who previously owned a poodle (because some hiring manager told their recruiter that engineers with poodles write cleaner code).

The poodle thing is a joke. Sort of.

The result is what I call the technical recruiting death spiral. Criteria get narrower, sourcing takes longer, candidates still fail interviews, and a massive long tail of talented engineers, who would be easier to close and would stay longer, gets completely overlooked because they haven't owned a poodle.

In this post, I go deep on this death spiral and test one of the leading AI sourcing tools to see if it can actually find me good engineers. Spoiler: it can't.

We built an AI model for eng hiring that outperforms recruiters and LLMs

In the last post in our hiring series, I talked about how, for six years, we ran the largest blind eng hiring experiment in history and placed thousands of people at top-tier companies. 46% of candidates who got offers at these companies didn't have top schools or top companies on their resumes. Despite that, these candidates performed as well as (or better than) their pedigreed counterparts, were 2X more likely to accept offers, and stayed at their companies 15% longer.

Of course, it's easy to say that you should hire non-traditional candidates. But how do you separate great ones from mediocre ones, when you can't look at brand names on their resumes for signal? The short answer is that it's really hard. We spent years figuring it out.

But we now have a predictive model that outperforms both human recruiters and LLMs and can reliably identify strong candidates, regardless of how they look on paper, from just an anonymized LinkedIn profile. Not only can it spot diamonds in the rough, but it can also identify candidates who look good but aren't actually good.

For years, hiring has relied on pedigree and optics because outcomes data was effectively inaccessible (especially data for candidates who don't pass resume screens). We think we've fixed that.

For six years, we ran the largest blind eng hiring experiment of all time. Here’s what happened.

You’ve probably heard about the blind orchestra auditions described by Malcolm Gladwell in Blink. We did the same thing with eng hiring.

With our blind approach, over six years, we placed thousands of engineers at FAANG and FAANG-adjacent companies and top-tier startups.

46% (almost half!) of those engineers didn’t have either a top school or a top company on their resume. In a normal (not blind) hiring process, these candidates wouldn’t even have gotten an interview.

Are recruiters better than a coin flip at judging resumes? Here's the data.

In partnership with the team at Learning Collider, we ran a study to see how good recruiters were at judging resumes.

We asked technical recruiters to review and make judgments about engineers’ resumes, just as they would in their current roles.

They answered two questions per resume:

- Would you interview this candidate?

- What is the likelihood this candidate will pass the technical interview?

We ended up with nearly 2,200 evaluations of over 1,000 resumes. We then compared those judgments to how those engineers performed in interviews on our platform. Here's what we learned.

The 3 things that diversity hiring initiatives get wrong

I’ve been hiring engineers in some capacity for the past decade. Five years ago I founded interviewing.io, a technical recruiting marketplace that provides engineers with anonymous mock interviews and then fast-tracks top performers, regardless of who they are or how they look on paper, into interviews at top companies. We’ve hosted close to 100K technical interviews on our platform and have helped thousands of engineers find jobs. For the last year or so, we’ve also been running a Fellowship program specifically for engineers from underrepresented backgrounds. That’s all to say that even though I have developed some strong opinions about “diversity hiring” initiatives, my opinions are based not on anecdotes but on cold, hard data. And the data points …

We looked at how a thousand college students performed in technical interviews to see if where they went to school mattered. It didn't.

interviewing.io is a platform where engineers practice technical interviewing anonymously. If things go well, they can unlock the ability to participate in real, still anonymous, interviews with top companies like Twitch, Lyft and more. Earlier this year, we launched an offering specifically for university students, with the intent of helping level the playing field right at the start of people’s careers. The sad truth is that with the state of college recruiting today, if you don’t attend one of very few top schools, your chances of interacting with companies on campus are slim. It’s not fair, and it sucks, but university recruiting is still dominated by career fairs. Companies pragmatically choose to visit the same …

If you care about diversity, don't just hire from the same five schools

EDIT: Our university hiring platform is now on Product Hunt!

If you’re a software engineer, you probably believe that, despite some glitches here and there, folks who have the technical chops can get hired as software engineers. We regularly hear stories about college dropouts, who, through hard work and sheer determination, bootstrapped themselves into millionaires. These stories appeal to our sense of wonder and our desire for fairness in the world, but the reality is very different. For many students looking for their first job, the odds of breaking into a top company are slim because they will likely never even have the chance to show their skills in an interview. For these students ...

LinkedIn endorsements are dumb. Here’s the data.

If you’re an engineer who’s been endorsed on LinkedIn for any number of languages/frameworks/skills, you’ve probably noticed that something isn’t quite right. Maybe they’re frameworks you’ve never touched or languages you haven’t used since freshman year of college. No matter the specifics, you’re probably at least a bit wary of the value of the LinkedIn endorsements feature. The internets, too, don’t disappoint in enumerating some absurd potential endorsements or in bemoaning the lack of relevance of said endorsements, even when they’re given in earnest. Having a gut feeling for this is one thing, but we were curious about whether we could actually come up with some numbers that showed how useless endorsements can be, and …

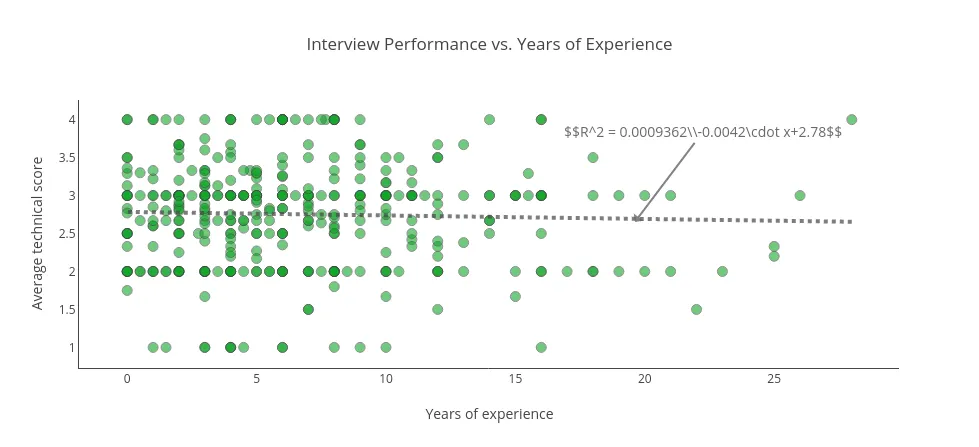

Lessons from 3,000 technical interviews… or how what you do after graduation matters way more than where you went to school

The first blog post I published that got any real attention was called “Lessons from a year’s worth of hiring data”. It was my attempt to understand what attributes of someone’s resume actually mattered for getting a software engineering job. Surprisingly, where someone went to school didn’t matter at all, and by far the strongest signal came from the number of typos and grammatical errors on their resume. Since then, I’ve discovered (and written about) how useless resumes are, but ever since writing that first post, I’ve been itching to do something similar with interviewing.io’s data. For context, interviewing.io is a platform where people can practice technical interviewing anonymously …

Resumes suck. Here's the data.

About a year ago, after looking at the resumes of engineers we had interviewed at TrialPay in 2012, I learned that the strongest signal for whether someone would get an offer was the number of typos and grammatical errors on their resume. On the other hand, where people went to school, their GPA, and their highest degree earned didn’t matter at all. These results were pretty unexpected, ran counter to how resumes were normally filtered, and left me scratching my head ...

Lessons from a year’s worth of hiring data

I ran technical recruiting at TrialPay for a year before going off to start my own agency. Because I used to be an engineer, one part of my job was conducting first-round technical interviews, and between January 2012 and January 2013, I interviewed roughly 300 people for our back-end/full-stack engineer position.

TrialPay was awesome and gave me ...
