Should you outsource your technical interviews? And should you use us or Karat?

We’ve recently entered the outsourced interview business (which has historically been dominated by the good people at Karat).

The pieces had been there for a while. Meta had used our tooling to conduct their own interviews, several top-tier VCs had used us to help them vet their talent pool, companies routinely use us to help their engineers prepare for an acquisition, and, most importantly, hundreds of thousands of engineers have used us to practice for their own interviews over the last decade.

So, we're finally doing it! Our big differentiator is interviewer background and experience. Our interviewers are senior, staff, and principal engineers from FAANG, FAANG+, and the frontier AI labs. Only the top 5% of interviewers from our mock interview pool qualify to conduct interviews on behalf of customers.

In this post, we talk about whether you should outsource interviewing in the first place, and if so, whether Karat or interviewing.io is the right choice for you.

How to spot great non-traditional candidates (when you can't rely on brands)

If you've been in eng hiring for any length of time, you've probably noticed that the candidates who look best on paper don't always turn out to be the best engineers, and some of the strongest people you've ever worked with often took wildly unconventional paths to get there.

Despite that, most hiring pipelines are still built to filter for pedigree and brands. This insistence on brand names creates a massive talent arbitrage opportunity for recruiting teams who are willing to look beyond them. As everyone's AI-powered funnels converge on the same narrow set of profiles, a growing long tail of talented engineers — people who are smart, who can get things done, who will be easier to close and stay longer — are being systematically overlooked. Anyone who figures out how to identify these people is ironically going to win the talent war.

We analyzed ten years of hiring data and looked through our roster of non-traditional top performers to see what their resumes had in common. Here are the exact signals you need to look for to identify diamonds in the rough.

More filters, same broken process: how AI sourcing tools are making hiring worse

I've spent 15 years in technical recruiting, and I've spent most of that time trying to put into words everything that's wrong with how we hire engineers. Here it is.

Everyone is chasing the same candidates who look good on paper. Many of them aren't looking right now, and many of them aren't actually good. But there's no way to search for "good." LinkedIn doesn't have that filter. So recruiters rely on proxies. Where did someone work? FAANG? Top school? Specific VC-backed startup?

The new wave of AI sourcing tools was supposed to fix this. It didn't. The data about who's actually good and who's actually looking just doesn't exist in these tools. So instead of solving the problem, they let recruiters get way more specific about the wrong things. Now you can ask for a FAANG engineer who also worked at a startup backed by a specific investor and who previously owned a poodle (because some hiring manager told their recruiter that engineers with poodles write cleaner code).

The poodle thing is a joke. Sort of.

The result is what I call the technical recruiting death spiral. Criteria get narrower, sourcing takes longer, candidates still fail interviews, and a massive long tail of talented engineers who would be easier to close and stay longer get completely overlooked because they haven't owned a poodle.

In this post, I go deep on this death spiral and test one of the leading AI sourcing tools to see if it can actually find me good engineers. Spoiler: it can't.

We built an AI model for eng hiring that outperforms recruiters and LLMs

In the last post in our hiring series, I talked about how, for six years, we ran the largest blind eng hiring experiment in history and placed thousands of people at top-tier companies. 46% of candidates who got offers at these companies didn't have top schools or top companies on their resumes. Despite that, these candidates performed as well as (or better than) their pedigreed counterparts, were 2X more likely to accept offers, and stayed at their companies 15% longer.

Of course, it's easy to say that you should hire non-traditional candidates. But how do you separate great ones from mediocre ones, when you can't look at brand names on their resumes for signal? The short answer is that it's really hard. We spent years figuring it out.

But we now have a predictive model that outperforms both human recruiters and LLMs and can reliably identify strong candidates, regardless of how they look on paper, just from an (anonymized) LinkedIn profile. Not only can it spot diamonds in the rough, but it can also identify candidates who look good but aren't actually good.

For years, hiring has relied on pedigree and optics because outcomes data was effectively inaccessible (especially data for candidates who don't pass resume screens). We think we've fixed that.

For six years, we ran the largest blind eng hiring experiment of all time. Here’s what happened.

You’ve probably heard about the blind orchestra auditions described by Malcolm Gladwell in Blink. We did the same thing with eng hiring.

With our blind approach, over six years, we placed thousands of engineers at FAANG and FAANG-adjacent companies and top-tier startups.

46% (almost half!) of those engineers didn’t have either a top school or a top company on their resume. In a normal (not blind) hiring process, these candidates wouldn’t even have gotten an interview.

Why engineers don’t like takehome assignments – and how companies can fix them

We surveyed almost 700 of our users about their experiences with take-homes and interviewed a handful more for deeper insights. We learned a lot—mostly about candidates' poor experiences and negative feelings toward take-homes. They take a lot of time. They don’t respect candidates’ time. Candidates often get no feedback. And candidates are almost never compensated. Really, it's all about value asymmetry.

The good news? Turns out there are some pretty simple things companies can do to vastly improve their take-home assignments.

The other half, or why interviewers aren't always great and how to make them better

It is high time we start talking about interviewing. I know it seems like we are talking about interviewing all the time, but we are usually talking about only one half of the equation: How to be a good candidate. What about the other half? What about the interviewer?

In the last decade, there has been an explosion of attention for candidates and how to improve their interview performance. This stands in stark contrast to the preparation of the interviewer. If you are lucky, your interviewer might have gotten a two-hour class on how to ask only bona fide work-related questions and has sat through two shadow interviews. Maybe they have even done a few interviews! Consequently, there is a lot of bad interviewing being done. That needs to change.

Why AI can’t do hiring

The recent exciting and somewhat horrifying inflection point in AI capability tipped me into writing this blog post.

I simply don't believe that AI can do hiring. My argument isn't about bias (though bias is a real problem) or that it's technologically impossible. It's just that the training data simply isn't available.

Most people believe that if you can somehow combine what's available on LinkedIn, GitHub, and the social graph (who follows whom on Twitter etc.), you'll be able to find the good engineers who are actively looking and also figure out what they want. This is wrong. None of those 3 sources are actually useful. At the end of the day, you can’t use AI for hiring if you don’t have the data. And if you have the data, then you don’t strictly need AI.

We have the best technical interviewers on the market. Here's how we do it.

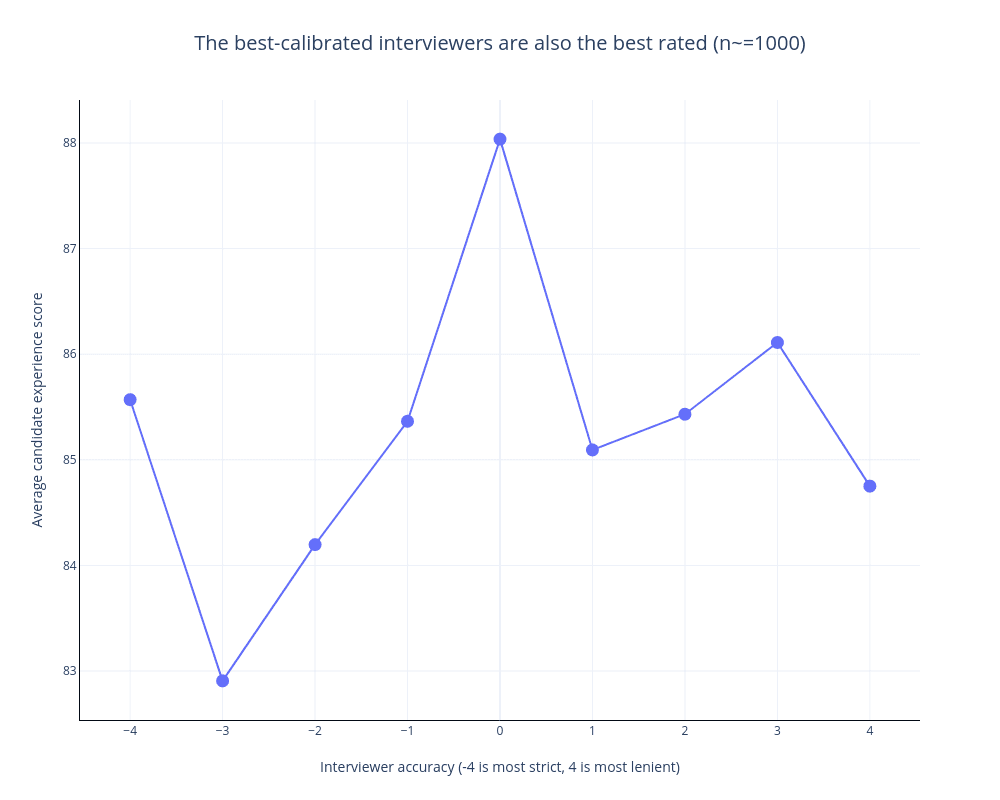

interviewing.io is an anonymous mock interview platform and eng hiring marketplace. We make money in two ways: engineers pay us for mock interviews, and employers pay us for access to the best performers. This means that we live and die by the quality of our interviewers in a way that no single employer does – if we don’t have really well-calibrated interviewers, who also create great candidate experience, we don’t get paid.

In a recent post, we shared how, over time, we came up with two metrics that, together, tell a complete and compelling story about interviewer quality: the candidate experience metric and the calibration metric. In this post, we’ll talk about how to apply our learnings about interviewer quality to your own process. We’ve made a bunch of mistakes so you don’t have to! It boils down to choosing the right people, tracking those 2 metrics diligently, rewarding good behavior, and committing to providing feedback to your candidates.

Why giving feedback (whether it’s good or bad) will help you hire

Giving feedback will not only make candidates you want today more likely to join your team, but it’s also crucial to hiring the candidates you might want down the road. Technical interview outcomes are erratic, and according to our data, only about 25% of candidates perform consistently from interview to interview.

Our business depends on having the best interviewers, so we built an interviewer rating system. And you can too.

interviewing.io is an anonymous mock interview platform and eng hiring marketplace. Engineers use us for mock interviews, and we use the data from those interviews to surface top performers, in a much fairer and more predictive way than a resume. If you’re a top performer on interviewing.io, we fast-track you at the world’s best companies.

We make money in two ways: engineers pay us for mock interviews, and employers pay us for access to the best performers. To keep our engineer customers happy, we have to make sure that our interviewers deliver value to them by conducting realistic mock interviews and giving useful, actionable feedback afterwards. To keep our employer customers happy, we have to make sure that the engineers we send them are way better than the ones they’re getting without us. Otherwise, it’s just not worth it for them.

This means that we live and die by the quality of our interviewers, in a way that no single employer does, no matter how much they say they care about people analytics or interviewer metrics or training. If we don’t have really well-calibrated interviewers, who also create great candidate experience, we don’t get paid.

In this post, we’ll explain exactly how we compute and use these metrics to get the best work out of our interviewers.

Hamtips, or why I still run the Technical Phone Screen as the Hiring Manager

“Hamtips” stands for “Hiring Manager Technical Phone Screen.” This combines two calls: the Technical Phone Screen (TPS), which is a coding exercise that usually happens before the onsite, and the HMS call, which is a call with the Hiring Manager. By combining these two steps you shorten the intro-to-offer by ~1 week and reduce candidate dropoff by 5-10%. It’s also a lot less work for recruiters playing scheduling battleship. Finally, Hiring Managers will, on average, be better at selling working at the company – it’s kind of their job.

How to write (actually) good job descriptions

When you start writing a job description, the first question you should ask yourself is, Am I trying to attract the right people, or am I trying to keep the wrong people out? Then, once you answer it, write for that audience deliberately, because it’s really hard to write for both…

The technical interview practice gap, and how it keeps underrepresented groups out of software engineering

I’ve been hiring engineers in some capacity for the past decade, and five years ago I founded interviewing.io, a technical recruiting marketplace that provides engineers with anonymous mock interviews and then fast-tracks top performers—regardless of who they are or how they look on paper—at top companies. We’ve hosted close to 100K technical interviews on our platform and have helped thousands of engineers find jobs. Since last year, we’ve also been running a Fellowship program specifically for engineers from underrepresented backgrounds. All that is to say that even though I have strong opinions about “diversity hiring” initiatives, I’ve acquired them the honest way, through laboratory experience.

Technical phone screen superforecasters

“The new VP wants us to double engineering’s headcount in the next six months. If we have a chance in hell to hit the hiring target, you seriously need to reconsider how fussy you’ve become.”

It’s never good to have a recruiter ask engineers to lower their hiring bar, but he had a point. It can take upwards of 100 engineering hours to hire a single candidate, and we had over 50 engineers to hire. Even with the majority of the team chipping in, engineers would often spend multiple hours a week in interviews. Folks began to complain about interview burnout. Also, fewer people were actually getting offers; the onsite pass rate had fallen by almost a third, from ~40% to under 30%. This meant we needed even more interviews for every hire. Visnu and I were early engineers bothered most by the state of our hiring process. We dug in. Within a few months, the onsite pass rate went back up, and interviewing burnout receded. We didn’t lower the hiring bar, though. There was a better way.

No engineer has ever sued a company because of constructive post-interview feedback. So why don’t employers do it?

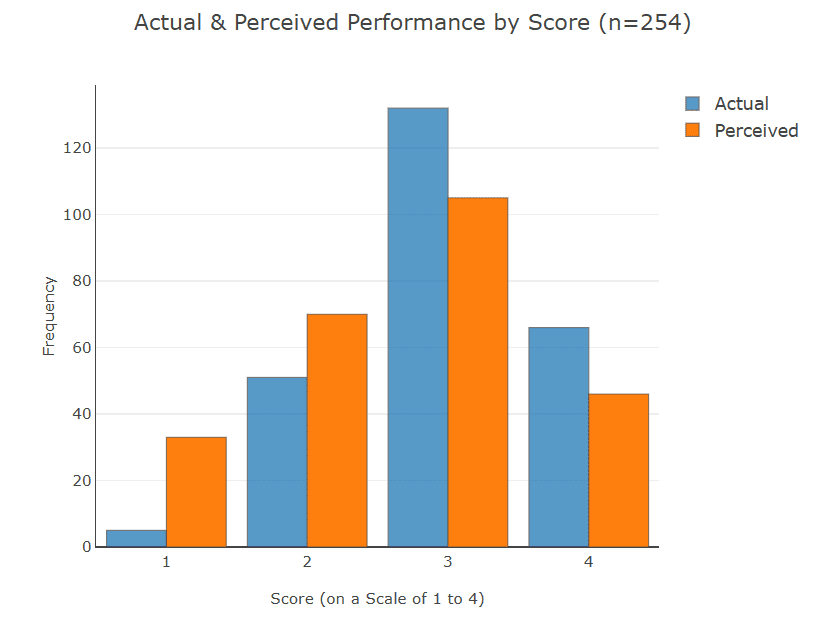

One of the things that sucks most about technical interviews is that they’re a black box: candidates (usually) get told whether they made it to the next round, but they’re rarely told why they got the outcome that they did. Lack of feedback, or feedback that doesn’t come right away, isn’t just frustrating to candidates. It’s bad for business. We did a whole study on this. It turns out that candidates chronically underrate and overrate their technical interview performance. Where this finding starts to get actionable is that there’s a statistically significant relationship between whether people think they did well in an interview and whether they’d want to work with you. In other words, …

3 exercises to craft the kind of employer brand that actually makes engineers want to work for you

If I’m honest, I’ve wanted to write something about employer brand for a long time. One of the things that really gets my goat is when companies build employer brand by over-indexing on banalities (“look we have a ping pong table!”, “look we’re a startup so you’ll have a huge impact”, etc.) instead of focusing on the narratives that make them special. Hiring engineers is really hard. It’s hard for tech giants, and it’s hard for small companies… but it’s especially hard for small companies people haven’t quite heard of, and they can use all the help they can get because talking about impact and ping pong tables just doesn’t cut it anymore. At interviewing.io, …

You probably don’t factor in engineering time when calculating cost per hire. Here’s why you really should.

Whether you’re a recruiter yourself or an engineer who’s involved in hiring, you’ve probably heard of the following two recruiting-related metrics: time to hire and cost per hire. Indeed, these are THE two metrics that any self-respecting recruiting team will track. Time to hire is important because it lets you plan — if a given role has historically taken 3 months to fill, you’re going to act differently when you need to fill it again than if it takes 2 weeks. And, traditionally, cost per hire has been a planning tool as well — if you’re setting recruiting budgets for next year and have a headcount in mind, seeing what recruiting spent last year is …

What do the best interviewers have in common? We looked at thousands of real interviews to find out.

At interviewing.io, we’ve analyzed and written at some depth about what makes for a good interview from the perspective of an interviewee. However, despite the inherent power imbalance, interviewing is a two-way street. I wrote a while ago about how, in this market, recruiting isn’t about vetting as much as it is about selling, and not engaging candidates in the course of talking to them for an hour is a woefully missed opportunity. But, just like solving interview questions is a learned skill that takes time and practice, so, too, is the other side of the table. Being a good interviewer takes time and effort and a fundamental willingness to get out of autopilot and …

People are still bad at gauging their own interview performance. Here’s the data.

interviewing.io is an anonymous technical interviewing platform. We started it because resumes suck and because we believe that anyone, regardless of how they look on paper, should have the opportunity to prove their mettle. In the past few months, we’ve amassed over 600 technical interviews along with their associated data and metadata. Interview questions tend to fall into the category of what you’d encounter at a phone screen for a back-end software engineering role at a top company, and interviewers typically come from a mix of larger companies like Google, Facebook, and Twitter, as well as engineering-focused startups like Asana, Mattermark, KeepSafe, and more. Over the course of the next few posts, we’ll be sharing some …

A founder’s guide to making your first recruiting hire

Recently, a number of founder friends have asked me about how to approach their first recruiting hire, and I’ve found myself repeating the same stuff over and over again. Below are some of my most salient thoughts on the subject. Note that I’ll be talking a lot about engineering hiring because that’s what I know, but I expect a lot of this applies to other fields as well, especially ones where the demand for labor outstrips supply. Don’t get caught up by flashy employment history; hustle trumps brands. At first glance, hiring someone who’s done recruiting for highly successful tech giants seems like a no-brainer. Google and Facebook are good at hiring great engineers, right? …

Engineers can't gauge their own interview performance. And that makes them harder to hire.

interviewing.io is an anonymous technical interviewing platform. We started it because resumes suck and because we believe that anyone, regardless of how they look on paper, should have the opportunity to prove their mettle. In the past few months, we’ve amassed over 600 technical interviews along with their associated data and metadata. Interview questions tend to fall into the category of what you’d encounter at a phone screen for a back-end software engineering role at a top company, and interviewers typically come from a mix...
