The 3 things that diversity hiring initiatives get wrong

I’ve been hiring engineers in some capacity for the past decade. Five years ago I founded interviewing.io, a technical recruiting marketplace that provides engineers with anonymous mock interviews and then fast-tracks top performers—regardless of who they are or how they look on paper—at top companies.

We’ve hosted close to 100K technical interviews on our platform and have helped thousands of engineers find jobs. For the last year or so, we’ve also been running a Fellowship program specifically for engineers from underrepresented backgrounds.

That’s all to say that even though I have developed some strong opinions about “diversity hiring” initiatives, my opinions are based not on anecdotes but on cold, hard data. And the data points to the following 3 problems with these initiatives:

- Over-indexing on unconscious bias, which ignores the much bigger problem of discrimination based on pedigree (i.e., conscious bias): Too many organizations invest in resume blinding and other attempts to mitigate unconscious bias at the top of the funnel. But they continue to ignore the much more problematic, ever-present conscious bias against engineers who didn’t attend a top school or work at a top company, which is especially troubling given that there are many more underrepresented engineers who didn’t attend top schools.

- Focusing on bias in the middle of the funnel rather than the top: The top of the funnel is orders of magnitude larger than the middle. By the time you get to the middle, you’ve weeded out most of your candidates from underrepresented backgrounds, and the damage has been done. Investing in bias training during interviews, therefore, gives you diminishing returns and is more of an exercise in optics than an attempt to fix anything.

- Ignoring the technical interview practice gap: Technical interviews are a learned skill, but access to practice is not equitably distributed. As a result, marginalized groups often underperform—not because they’re worse at engineering but simply because they haven’t had access to the same level of preparation.

All three of these problems are somewhat subtle and require a bit of explanation, but until they’re addressed, we’re not going to make meaningful progress toward equitable representation in software engineering.

Problem 1: Over-indexing on unconscious bias at the top of the funnel (while ignoring conscious bias)

First off, what is the top of the funnel? In the case of hiring, this refers to the stage where we decide whether we want to move a candidate into our process. If a candidate applies online (inbound), this is the step where we look at their resume and decide whether or not to move forward. And if we’re actively trying to bring candidates into our process, this is when we look at their public profile (usually LinkedIn) and decide if we want to reach out to them (outbound).

Whether the candidate is inbound or outbound, we have limited information available to help us make a decision. The information we have available typically consists of a resume (or a resume-like profile) and contains biographical info like name, where they went to school and their graduation date, and their work history. From these few bits of data, we can glean—intentionally or not—a candidate’s gender and sometimes their race, their age, and their pedigree (i.e., did they attend an elite school and/or work at a top company?).

Unconscious vs. conscious bias

In this context, unconscious bias refers to making quick judgments, often without being aware of it, against someone based on the biographical info and other traits gleaned from their resume. In the context of engineering hiring, we tend to focus specifically on reducing bias around attributes like gender and race (though of course discrimination based on other traits like sexual orientation, age, beliefs, disability, etc., is also unacceptable).

Conscious bias, on the other hand, is the very intentional decision not to move forward with candidates who lack pedigree, i.e., those who didn’t attend an elite computer science school or work at a company with a well-established brand. Unlike unconscious bias, this is not something organizations typically condemn, and more often than not, conscious bias is actually codified into the hiring process, with explicit rules for the recruiting team that say something like, “These are the schools we hire from, and these are the companies we look for on a resume. If a candidate doesn’t have either, let’s not move forward.”

Either way, at the top of the funnel, before we ever interact with a candidate, we must decide if we want to bring them in for an interview based on very limited information, and that’s where things get complicated.

Why anonymizing resumes isn’t enough

At the top of the funnel, the canonical way to mitigate unconscious bias is to anonymize resumes. The idea is that if we can remove all signals that might help us uncover someone’s race and gender, then we, by definition, can’t be biased, right?

Here’s the problem. Though anonymizing resumes isn’t inherently bad, it’s not nearly enough. In a nutshell, as long as we keep over-indexing on top schools and top companies, it’s not mathematically possible to hit our goals, no matter how much unconscious bias mitigation and resume anonymization we perform.

It’s a fact: there are far more women and people of color who didn’t attend one of a handful of well-known universities than there are who did. To make matters worse, filtering based on pedigree doesn’t really work! As a result, the pedigree-based tradeoffs we currently make (in the name of keeping the bar high) exclude people from underrepresented backgrounds… without actually getting us the best candidates.

Over-indexing on resumes and pedigree

Let’s talk about resumes first. The resume is a demonstrably terrible way to judge whether someone is a good engineer. People often talk about how, in the spirit of fairness, it’s important to consider non-pedigreed candidates, but there’s a tacit agreement that pedigree is useful and that we’re doing something charitable by broadening the pool. As a result, we accept the premise that we’re going to be either pragmatic or fair, but not both. However, everything I’ve learned from being in this business for more than a decade has shown me that pitting fairness against pedigree creates a false dichotomy.

In other words, resumes inherently don’t carry enough signal about a candidate’s engineering ability to be useful. I’ve written extensively (and angrily) on this subject, and below I’ve linked the TL;DRs for 3 studies that all point to resumes being largely useless:

- The number of typos and grammatical errors on someone’s resume matters much more than where they worked or went to school (the latter, in fact, didn’t matter at all)

- Neither recruiters nor engineers can figure out who the strong candidates are based on their resumes, and, more importantly, they all disagree with each other about what a good candidate looks like

- Data from interviewing.io (based on thousands of interviews) has shown that where someone went to school has no bearing on their performance in technical interviews

Simply put, even if you don’t care one iota about inclusion and just pragmatically want to hire the best candidates with the fewest interviews, you should STILL stop focusing on resumes and pedigree.

So we’re making decisions based on an incorrect heuristic and bad data. That’s not great, but what does it have to do with diversity? Here’s the thing. If you make hiring decisions based on pedigree, the numbers simply aren’t there, and it’s mathematically impossible to reach your hiring goals.

Here is some napkin math. Every year, about 60,000 students graduate in the US with a computer science degree. If you only hire from top schools, your pool narrows to about 6,000. Of those, about 1,500 are women and fewer than 1,000 are people of color. With the U.S. Bureau of Labor Statistics (BLS) expecting nearly 140,000 new engineering jobs over the 2016–26 decade, it’s laughable to believe that any given company will be able to meet its diversity goals by hiring this way.
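To make the napkin math easier to check, here is a minimal Python sketch that only restates the annual figures quoted above and derives the shares they imply. The variable names are mine, and "fewer than 1,000" is treated as an upper bound of 1,000, which is an assumption for illustration rather than a precise count.

```python
# Annual US figures as quoted in the text (all approximate).
cs_grads_per_year = 60_000
top_school_grads = 6_000
top_school_women = 1_500
# The text says "fewer than 1,000", so 1,000 is used here as an upper bound.
top_school_people_of_color_max = 1_000

# Share of all CS grads that a top-schools-only filter keeps.
top_school_share = top_school_grads / cs_grads_per_year

# Composition of the pool that survives the filter.
women_share = top_school_women / top_school_grads
people_of_color_share_max = top_school_people_of_color_max / top_school_grads

# Grads who are never considered under a top-schools-only filter.
excluded_grads = cs_grads_per_year - top_school_grads

print(f"Pool kept by a top-schools-only filter: {top_school_share:.0%}")
print(f"Women in that pool: {women_share:.0%}")
print(f"People of color in that pool: at most {people_of_color_share_max:.0%}")
print(f"Grads excluded before anyone reads a resume: {excluded_grads:,}")
```

In other words, the pedigree filter discards roughly nine out of ten graduates before any other kind of bias has a chance to come into play.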

What happens when you mitigate conscious bias

But what happens if you look beyond elite schools and start mitigating conscious bias? That’s when you start to have a real shot. Below is a model we built to capture the size of the candidate pool (for gender, specifically) that shows what will happen if we keep hiring the way we’re hiring now vs. expanding our pool beyond elite schools and focusing on what people can actually do, instead of how they look on paper.

You can read more about how we built this model, but we looked at two alternative sources of candidates: MOOCs and bootcamps. In 2015 alone, over 35 million people signed up for at least one MOOC, and in 2018 MOOCs collectively had over 100M students.

Because they’re cheaper and much faster than a four-year program, bootcamps seem like a rational choice when compared to the price of attending a top university. Since 2013, bootcamp enrollment has grown 9X, with a total of 20,316 graduates in 2018. Though these numbers represent enrollment across all genders, and the raw number of grads lags behind CS programs, the portion of women graduating from bootcamps is also on the rise and graduation from online programs has actually reached gender parity (as compared to only 20% in traditional CS programs).

But, even if lower-tier schools and alternative programs have their fair share of talent, how do we surface the most qualified candidates in the absence of the signals we’re used to? Good news. In this brave new world, where we have the technology to write code together remotely, and where we can collect data and reason about it, technology has the power to free us from relying on proxies.

At interviewing.io, we make it possible to move away from proxies by providing engineers with hyperrealistic, rigorous mock interviews and connecting top performers with employers, regardless of how the candidates look on paper.

Or if you don’t use us, there’s a slew of asynchronous coding assessments, like CodeSignal, HackerRank, and Triplebyte, that can help you filter down your candidates before you invest precious engineering time into interviewing them.

So, despite the numbers and the bevy of available solutions, why do we still latch on to mitigating unconscious bias? Sadly, it’s easier. Removing names from a resume is eminently doable. Redefining credentialing in software engineering is really hard (I know, the interviewing.io team and I have been at this for over five years). But unless we can talk openly about this problem and stop pretending that unconscious bias mitigation will solve our problems, we can’t make meaningful progress toward equitable representation.

So far, we’ve just talked about bias at the top of the funnel. What about the bias we encounter when, instead of looking at a piece of paper, we’re interacting with another human, especially a human who doesn’t look like us?

Problem 2: Focusing on bias in the middle of the funnel rather than the top

The second problem I’ve observed occurs during the middle of the funnel, once candidates have made it through the door. Unconscious bias training tries to make us better at interacting with (and avoiding making judgments about) people who don’t look like us. It’s a well-intentioned attempt, but sadly it’s been shown not to work, and it can even be responsible for people digging their heels in on the prejudices they hold.

Most importantly, and this is perhaps the more subtle point, by the time a candidate gets to an interviewer, numerically speaking, it’s too late—you’ve already filtered out most of the candidates who would have moved your numbers, and any effort you put in at this step in the funnel gives you diminishing returns.

Put another way, there are often 100X as many candidates at the top of the funnel as at the next stage. Most get cut before they ever talk to a human, simply based on their resumes. Despite that, employers are investing in training interviewers, which unfortunately is only relevant at a stage that most of the non-traditional talent won’t reach. Any gains we can make in the middle of the funnel are mathematically negligible compared to the gains we can make at the top (where, as you saw in the previous section, we persist in our conscious bias and call it a best practice).

Especially when you have limited resources to mitigate bias in your process, and as more and more of your team fall victim to diversity fatigue, investing so much effort into a part of the funnel that has a very real cap on its ROI makes no sense—even less so given that the thing you’re doing doesn’t even work.
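The cap on mid-funnel ROI can be sketched with a toy two-stage funnel. Everything below is a hypothetical illustration: the only number taken from the text is the roughly 100X drop between the top of the funnel and the next stage, while the candidate count and the 20% interview pass rate are assumptions of mine, not interviewing.io data.

```python
def expected_hires(candidates, screen_pass_rate, interview_pass_rate):
    """Candidates who clear both the resume screen and the interviews."""
    return candidates * screen_pass_rate * interview_pass_rate

# Hypothetical inputs, chosen only for illustration.
candidates = 10_000          # candidates at the top of the funnel
screen_pass_rate = 1 / 100   # mirrors the "100X" drop described in the text
interview_pass_rate = 0.20   # assumed value

baseline = expected_hires(candidates, screen_pass_rate, interview_pass_rate)

# Ceiling for mid-funnel work: every interviewed candidate passes.
mid_funnel_ceiling = expected_hires(candidates, screen_pass_rate, 1.0)

# Ceiling for top-of-funnel work: every candidate gets an interview.
top_funnel_ceiling = expected_hires(candidates, 1.0, interview_pass_rate)

print(f"Baseline hires: {baseline:.0f}")
print(f"Max gain from fixing interviews: {mid_funnel_ceiling / baseline:.0f}x")
print(f"Max gain from fixing the screen: {top_funnel_ceiling / baseline:.0f}x")
```

Neither ceiling is reachable in practice, but under these assumptions the headroom at the top of the funnel is 100x while the headroom in the middle is only 5x, which is the sense in which mid-funnel effort has a hard cap on its return.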

Problem 3: Ignoring the technical interview practice gap

Of all the problems with diversity hiring, this one is the most subtle and perhaps the most damaging. Because it’s such an important (and tricky) topic that requires going in depth to fully understand, I encourage you to read the detailed post that I wrote last week focusing just on this topic.

Attending an elite computer science institution (like MIT, where I went) provides students with a number of benefits, but perhaps the most significant is boundless access to technical interview practice.

MIT offered a multi-week course dedicated to passing technical interviews, and I got support from my peers who were interviewing at FAANG for internships and new grad positions. This allowed us to practice with each other, share our successes and failures, and recognize just how much of technical interviewing is a numbers game. And we learned that bombing a Google interview did not mean that you weren’t meant to be an engineer. It just meant that you needed to work some more problems, do more mock interviews, and try again at Facebook.

But let’s put the anecdotal experience aside and examine the data that we at interviewing.io have collected. We’ve hosted close to 100K interviews on our platform, which has taught us two things about the technical interview: 1) like standardized testing, it’s a learned skill, and 2) unlike standardized testing, interview results are not consistent or repeatable—the same candidate can ace one interview and fail another one the same day.

The importance of interview practice

In a recent study, we looked at how people who got jobs at FAANG performed in practice vs. those who did not. We discovered that technical ability did not obviously associate with interview success, but the number of practice interviews people completed (either on interviewing.io or elsewhere) did. Surprisingly, no other factors we included in our model (seniority, gender, degree, etc.) mattered at all.

Secondly, technical interview performance from interview to interview is fairly inconsistent, even among strong candidates. (Notably, consistency appears to have nothing to do with seniority, pedigree, or anything else.) In fact, only about 20% of interviewees perform consistently from interview to interview. Why does this matter? Once you’ve done a few traditional technical interviews, you learn to account for and accept the volatility and lack of determinism in the process. If you happen to have the benefit of speaking with friends who’ve also been through it, it only gets easier. But what if you don’t?

How the interview practice gap hurts underrepresented groups the most

In an earlier post, we wrote about how women quit interview practice 7 times more often than men after just one bad interview. Unfortunately, this is likely affecting other underrepresented and underserved groups as well.

This is a broken process for everyone, but the flaws within the system hit these groups the hardest, simply because they haven’t had the opportunity to internalize exactly how much of technical interviewing is a game.

So what does this have to do with the practice gap? The key takeaway from our research is that there is a meaningful bump in performance (almost 2X as likely to pass!) for candidates who have completed at least 5 practice interviews. This isn’t a lot of interviews for someone who’s actively practicing and knows how the interview prep game works. But it’s a far cry from what companies that are looking to boost their diversity numbers offer their candidates, and it’s equally far from what bootcamps, an increasingly important source of candidates from underrepresented backgrounds, provide their students.

Why are bootcamps, universities, and employers all falling short and exacerbating the practice gap? With employers and bootcamps, the teams responsible for facilitating interview info sessions or mock interviews typically have non-technical backgrounds and lack a good grasp of the significant differences between preparing for behavioral interviews and technical ones.

Preparing for technical interviews (and other analytical, domain-specific interviews) is more similar to studying for a math test than rehearsing how to present yourself and tell your story. But until you’ve done it, you won’t really get it.

The worst effect of the practice gap occurs when companies simply walk away from their expanded recruiting efforts, with the mistaken belief that they should return to recruiting exclusively from elite institutions. Or worse, they sometimes have their unconscious biases about race and/or gender confirmed, when the actual problem isn’t the candidates but their lack of access to practice.

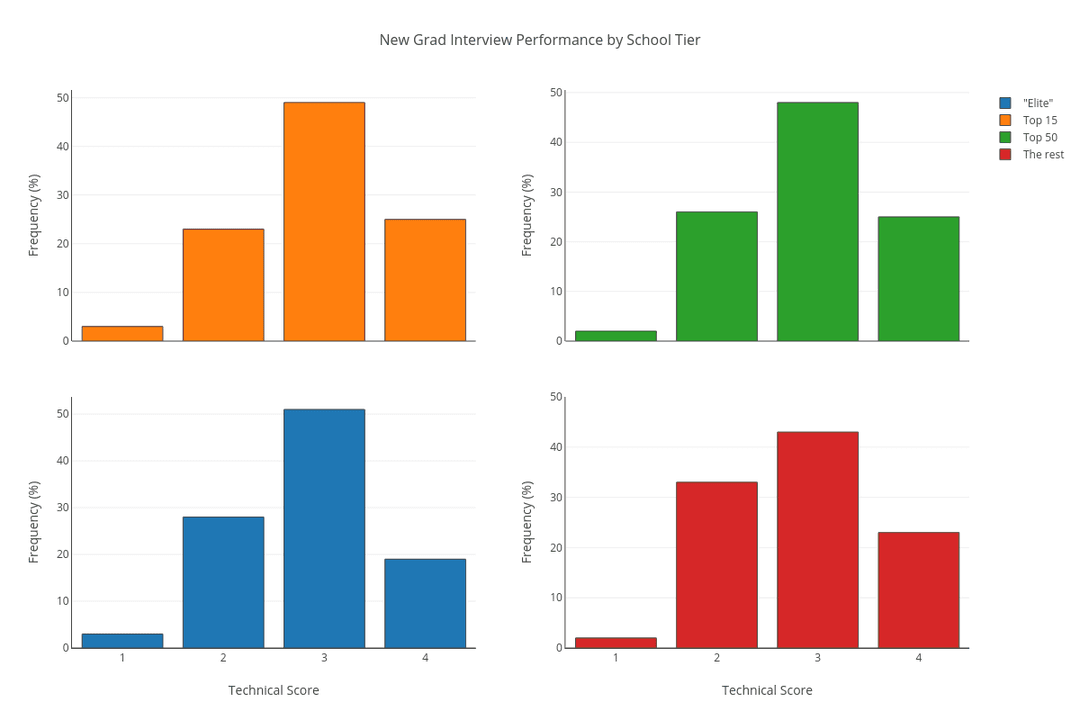

In fact, a few years ago, we ran a study where we looked at interview performance by school tier. For students who were actively and regularly practicing, there was no difference between elite schools and non-elite schools!

How to actually increase representation

So far I’ve outlined my three main problems with diversity hiring initiatives—but what’s the solution? A few things:

Stop over-indexing on resumes

In this brave new world, where we have the technology to write code together remotely, and where we can collect data and reason about it, technology has the power to free us from relying on proxies, so that we can look at each individual as an indicative, unique bundle of performance-based data points. At interviewing.io, we make it possible to move away from proxies by looking at each interviewee as a collection of data points that tell a story, rather than as a largely signal-less document that a recruiter looks at for 10 seconds before making a largely arbitrary decision and moving on to the next candidate.

Of course, this post lives on our blog, so I’ll take a moment to plug what we do. In a world where there’s a growing credentialing gap and where it’s really hard to figure out how to separate a mediocre non-traditional candidate from a stellar one, we can help. interviewing.io helps companies find and hire engineers based on ability, not pedigree. We give out free mock interviews to engineers, and we use the data from these interviews to identify top performers, independently of how they look on paper. Those top performers then get to interview anonymously with employers on our platform (we’ve hired for Lyft, Uber, Dropbox, Quora, and many other great, high-bar companies). And this system works. Not only are our candidates’ conversion rates 3X the industry standard (about 70% of our candidates ace their phone screens, as compared to 20-25% in a typical, high-performing funnel), but about 40% of the hires made by top companies on our platform have come from non-traditional backgrounds. Because of our completely anonymous, skills-first approach, we’ve seen an interesting phenomenon happen time and time again: when an engineer unmasks at the end of a successful interview, the company in question realizes that the candidate who just aced their phone screen was one whose resume was sitting at the bottom of the pile all along (we recently had someone get hired after having been rejected by that same company 3 times based on his resume!).

Frankly, think of how much time and money you’re wasting competing for only a small pool of superficially qualified candidates when you could be hiring overlooked talent that’s actually qualified. Your CFO will be happier, and so will your engineers. Look, whether you use us or something else, there’s a slew of tech-enabled solutions that are redefining credentialing in engineering, from asynchronous coding assessments like CodeSignal or HackerRank to solutions that help you vet your inbound candidate pool, like Karat.

And using these new tools isn’t just paying lip service to a long-suffering D&I initiative. It gets you the candidates that everyone in the world isn’t chasing without compromising on quality, helps you make more hires faster, and just makes hiring fairer across the board. And, yes, it will also help you meet your diversity goals.

Stop paying for unconscious bias training, and spend that budget on interview practice for your candidates

As you saw above, interview practice is absolutely key to success in technical interviews, but access to practice is not equitably distributed. I contend that tech giants, universities, and bootcamps could close the practice gap, level the playing field in software engineering, and hit our representation goals by simply providing candidates who need them with five professional mock interviews each. Hell, we would do it ourselves if we had the means. Yes, this will cost a few hundred dollars per candidate, but compared to the cost of sourcing and interviewing them, it’s small change.

Moreover, if you’re spending a significant amount of money on initiatives like unconscious bias training, which has been shown to be ineffective, you could make a much bigger impact by shifting that budget toward interview practice for your candidates.

If you’re an employer, reach out to us so we can help you make this a reality.

Whether you only do some of these things, and whether you do them with us or with other tools and products, let’s stop simply talking about how we need to fix representation in tech and instead do something that actually makes a difference. We can fix the diversity hiring initiatives that have failed us and ensure that the best people actually get hired.
